A Simple Electronic-Music Sequencer for Silverlight 3

July 12, 2009

Roscoe, N.Y.

The audio support in the Silverlight 2 MediaElement is limited to WMA and MP3 files. I always thought the omission of WAV file support to be a bit peculiar. After all, at some point during playback, the WMA and MP3 files are decompressed to the pure audio samples that comprise WAV files, so why not accept WAV files directly?

It's not that I wanted to play actual WAV files in a Silverlight app. No, I had something a little more ambitious in mind. I thought it might be fun to generate audio data dynamically and feed it into a MemoryStream attached to a MediaElement through the SetSource method. This would make possible an interactive electronic music synthesizer right on a web page. But the omission of WAV file support from Silverlight 2 was a big problem: I'd have to generate the audio data, and then convert it to either WMA or MP3, which involves lots of messy code and license issues.

In Silverlight 3, however, we have a solution. You still can't attach a WAV file or stream directly to a MediaElement, but you can derive from MediaStreamSource and attach it to the MediaElement and funnel either a WAV file or WAV data into that.

I don't think I'd want to figure out how to derive from MediaStreamSource on my own. Fortunately there are a couple of pioneers who have posted solutions. Gilles Khouzam demonstrated how to play WAV files in a blog entry entitled "Playing back Wave files in Silverlight" and Pete Brown demonstrated real-time sound generation in "Creating Sound using MediaStreamSource in Silverlight 3 Beta."

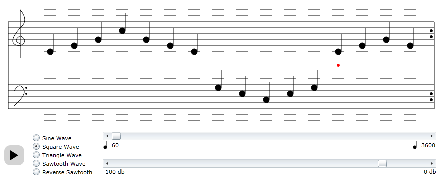

With those preliminaries done for me, I was able to slap together a primitive sequencer for Silverlight 3. (A sequencer is an electronic music instrument that plays a sequence of notes repetitively.) You can run it from here:

www.charlespetzold.com/silverlight/SimpleSequencer/SimpleSequencer.html

The button at the lower left plays and pauses. Use the mouse to drag notes up and down the two staves, from two octaves below to two octaves above middle C. Press Shift and click a note to toggle between sharp, flat, and natural. Radio buttons give you a choice of simple waveforms. The top scrollbar controls the tempo; the bottom one controls the volume.

Very little testing has been done with this program. I'm not even sure the pitches are correct, and I'd be shocked if I got the sharps and flats logic right! The sounds I'm generating in this thing are just naked waveforms without any filtering or processing or envelopes. Obviously getting better-sounding sounds is high on my agenda of enhancements.
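For anyone who wants to check the pitches independently, here is a minimal Python sketch of the usual equal-temperament calculation. It is not taken from the sequencer's source: the function name `note_frequency`, the A = 440 Hz tuning reference, and the idea of counting staff positions as diatonic steps from middle C are all assumptions made for illustration.

```python
# Semitone offsets of the natural notes C D E F G A B within one octave.
NATURAL_SEMITONES = [0, 2, 4, 5, 7, 9, 11]

MIDDLE_C = 60      # MIDI-style note number for middle C (assumed convention)
A_ABOVE = 69       # note number of the A above middle C
A_FREQUENCY = 440  # assumed tuning reference in Hz

def note_frequency(steps_from_middle_c, accidental=0):
    """Frequency in Hz of a staff position.

    steps_from_middle_c: diatonic steps (lines and spaces) above or
        below middle C; -14..14 covers two octaves either side.
    accidental: +1 for sharp, -1 for flat, 0 for natural.
    """
    # divmod floors toward negative infinity, so notes below
    # middle C land in the correct lower octave.
    octave, degree = divmod(steps_from_middle_c, 7)
    semitone = MIDDLE_C + 12 * octave + NATURAL_SEMITONES[degree] + accidental
    # Each semitone multiplies the frequency by the twelfth root of 2.
    return A_FREQUENCY * 2 ** ((semitone - A_ABOVE) / 12)
```

With these assumptions, `note_frequency(5)` is the A above middle C and `note_frequency(0, 1)` is C sharp. Note that a sharp or flat is simply one semitone up or down, so E sharp and F come out at the same frequency.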

Although I am overjoyed I can now generate music in real time in Silverlight, there are some problems, in particular a very noticeable latency issue. Internally, somebody (MediaElement?) wants a very comfortable buffer, so it won't start playing anything until it has that buffer, and if you change something dynamically, it won't be reflected in playback immediately. I suspect this problem will eliminate any type of interactive playing of music via the computer keyboard, but I'm curious to see how far I can get otherwise.

The source code is here. When you derive from MediaStreamSource and attach it to a MediaElement, it's going to want data at the rate of 176,400 bytes per second (for a 44,100 Hz sample rate, 16 bits per sample, and stereo; half that for my mono sequencer), and one way to do this is to set up a pipeline that delivers this data on demand. My MediaStreamSource derivative requests samples from a class called Attenuator, which controls the volume; Attenuator requests samples from the Sequencer class, which controls the note-to-note sequencing; and Sequencer requests samples from the Oscillator class, which actually generates the waveform data. All timing in the program is based on those original requests.
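The shape of that pull-based pipeline can be sketched in a few lines of Python. This is an illustration of the idea only, not a port of the Silverlight code: the class names mirror the ones above, but every method, field, and helper here (`next_sample`, `fill_buffer`, `bytes_per_second`, and so on) is a hypothetical stand-in, and the oscillator is limited to a sine wave.

```python
import math
import struct

SAMPLE_RATE = 44100  # samples per second

def bytes_per_second(sample_rate, bits_per_sample, channels):
    """Data rate the media pipeline will demand."""
    return sample_rate * (bits_per_sample // 8) * channels

class Oscillator:
    """End of the chain: generates a sine wave one sample at a time."""
    def __init__(self):
        self.frequency = 440.0
        self.phase = 0.0  # position within one cycle, 0.0 to 1.0

    def next_sample(self):
        value = math.sin(2 * math.pi * self.phase)
        self.phase = (self.phase + self.frequency / SAMPLE_RATE) % 1.0
        return value

class Sequencer:
    """Chooses the current note by counting the samples requested so far."""
    def __init__(self, source, frequencies, samples_per_note):
        self.source = source
        self.frequencies = frequencies
        self.samples_per_note = samples_per_note
        self.count = 0

    def next_sample(self):
        index = (self.count // self.samples_per_note) % len(self.frequencies)
        self.source.frequency = self.frequencies[index]
        self.count += 1
        return self.source.next_sample()

class Attenuator:
    """Scales whatever comes up the chain by a volume from 0.0 to 1.0."""
    def __init__(self, source, volume):
        self.source = source
        self.volume = volume

    def next_sample(self):
        return self.volume * self.source.next_sample()

def fill_buffer(source, sample_count):
    """Stand-in for the media source: pull samples on demand and
    pack them as 16-bit little-endian mono PCM."""
    values = []
    for _ in range(sample_count):
        sample = max(-1.0, min(1.0, source.next_sample()))
        values.append(int(sample * 32767))
    return struct.pack("<%dh" % sample_count, *values)

# Wire the chain together: two notes, a quarter second each, half volume.
chain = Attenuator(Sequencer(Oscillator(), [440.0, 880.0], SAMPLE_RATE // 4), 0.5)
one_second = fill_buffer(chain, SAMPLE_RATE)
```

Nothing in the sketch consults a clock. The Sequencer decides when to change notes purely by counting how many samples have been pulled through it, which is one way to read "all timing is based on those original requests." It also hints at where the latency comes from: a change to the volume or a note only affects samples that have not yet been requested.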

As I get deeper into this stuff (and if people seem to be interested), I'll discuss some of the principles of digital electronic-music synthesis in upcoming blog entries.

This looks like great fun, and I'm only getting started.