SpinPaint for Windows Phone 7 (with a Rant about Touch)

May 21, 2010

Roscoe, N.Y.

Last week I had the opportunity to attend a two-day class on Microsoft Surface programming conducted by Dr. Neil Roodyn, and I enjoyed it immensely. Although we experimented mostly with the PC-based simulator included with the Surface SDK, we also had the opportunity to deploy our programs on actual Surface machines, which was a big thrill.

On the second day of class, during my morning walk from my apartment in New York City to the Microsoft offices in midtown, I had an idea for a small Surface program, and by mid-afternoon, after two false starts, I was able to get it working and deployed on one of the Surface machines in the classroom.

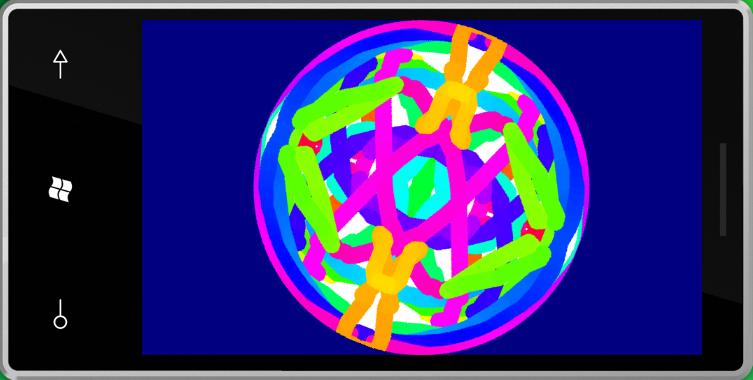

Imagine a disk that spins at 12 RPM. Touch the disk to paint with your finger (or fingers or palm or whatever). You can control the paint by moving your finger or by just letting the disk spin. To make the visuals more "interesting," the paint color cycles through the rainbow every 10 seconds, and anything that you paint is duplicated in mirror images in all four quadrants of the disk.
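The timing and symmetry described above come down to a few lines of arithmetic. What follows is a minimal sketch in Python (not the C# of the actual program), using only the numbers stated in the text: 12 RPM and a 10-second color cycle. The function names, the rotation direction, and the choice to place the disk-coordinate origin at the disk center are all assumptions made for illustration.

```python
import colorsys
import math

DISK_RPM = 12.0              # from the text: the disk spins at 12 RPM
COLOR_CYCLE_SECONDS = 10.0   # from the text: one pass through the rainbow


def disk_angle(elapsed_seconds):
    """Disk rotation in radians at the given time (12 RPM is 72 degrees/s)."""
    revolutions = DISK_RPM * elapsed_seconds / 60.0
    return (revolutions % 1.0) * 2.0 * math.pi


def paint_color(elapsed_seconds):
    """RGB bytes for the current paint color; the hue wraps every 10 seconds."""
    hue = (elapsed_seconds % COLOR_CYCLE_SECONDS) / COLOR_CYCLE_SECONDS
    r, g, b = colorsys.hsv_to_rgb(hue, 1.0, 1.0)
    return (round(r * 255), round(g * 255), round(b * 255))


def screen_to_disk(point, center, angle):
    """Undo the disk rotation so a touch maps to the right spot on the bitmap.

    Assumption: the disk is drawn rotated by +angle about `center`, so a
    screen point is rotated by -angle to get disk-relative coordinates.
    """
    dx, dy = point[0] - center[0], point[1] - center[1]
    cos_a, sin_a = math.cos(-angle), math.sin(-angle)
    return (dx * cos_a - dy * sin_a, dx * sin_a + dy * cos_a)


def mirror_in_quadrants(disk_point):
    """Reflect a disk-relative point across both axes: one copy per quadrant."""
    x, y = disk_point
    return [(x, y), (-x, y), (x, -y), (-x, -y)]
```

A finger held perfectly still still paints an arc, because `screen_to_disk` returns a different disk point on every frame as the angle advances.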

I was very pleased that the program seemed to satisfy the requirements of a Microsoft Surface application: It was simple to understand and use, and it was communal. Multiple people could sit around the Surface machine and add to the "art."

Here's the FingerPaint source code. I haven't attempted to clean it up; the code is as I left it last Friday. Of course you'll need the Surface SDK to compile and run it, and a Microsoft Surface machine to run it as it was intended to be run.

You can write programs for Microsoft Surface using either WPF or XNA, and Surface extensions are provided to get at the really cool features. I chose WPF for this application. The spinning disk is really a RenderTargetBitmap, and almost everything in the program occurs in a CompositionTarget.Rendering event handler, which is called in synchronization with the video refresh.

Inside a Microsoft Surface unit, touch information is obtained through five cameras underneath the screen that are sensitive to reflections of infrared light. Fingers touching the screen are resolved to ellipses; the size and orientation of each ellipse roughly indicate the pressure of the finger on the screen and how the finger is turned. The Contacts class (one of the Surface extensions to WPF and XNA) allows a program to obtain touch state information at any time. My FingerPaint program gets this information in the CompositionTarget.Rendering handler, and then uses it to render Ellipse and Path elements on the bitmap.

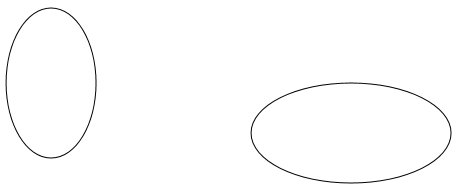

In the general case, two successive calls to the static Contacts.GetContactsOver method will obtain two ellipses with rotation information to indicate how a finger moved:

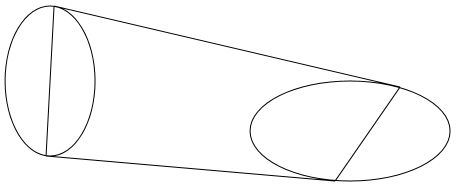

In real life, these ellipses will probably be much closer and very often will overlap. Regardless, a painting program needs to render these two ellipses as well as the quadrilateral connecting the ellipses on the RenderTargetBitmap:

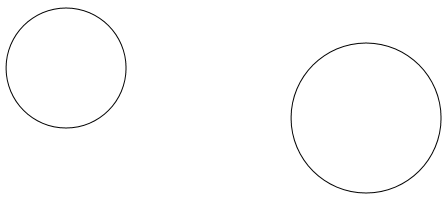

While coding this program last Friday, I quickly determined that connecting two arbitrarily oriented ellipses with a solid filled line was algorithmically a little too complex to work out while sitting in a classroom, so I averaged the two ellipse axes to turn the ellipses into circles, possibly of different sizes:

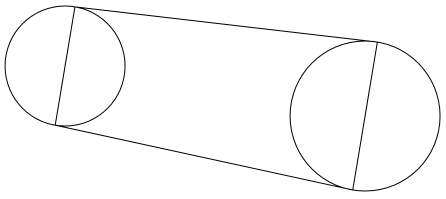

This simplified the job of calculating the vertices of the connecting quadrilateral immensely, reducing it to rudimentary vector manipulation.
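The original listing is not reproduced here, so the following is only one plausible way to do that vector manipulation, sketched in Python rather than C#. It assumes the quadrilateral's corners are the points where the two external tangent lines touch the circles. The function name `connecting_quad` and its argument layout are hypothetical.

```python
import math


def connecting_quad(c1, r1, c2, r2):
    """Return the four corners of the quadrilateral joining two circles.

    The corners are the external-tangent points, in the order
    [circle 1 side A, circle 2 side A, circle 2 side B, circle 1 side B].
    Returns None when one circle lies inside the other, since no
    external tangent exists and the larger circle already covers both.
    """
    dx, dy = c2[0] - c1[0], c2[1] - c1[1]
    dist = math.hypot(dx, dy)
    if dist <= abs(r1 - r2):
        return None

    # Unit vector along the line of centers, and its perpendicular.
    ux, uy = dx / dist, dy / dist
    px, py = -uy, ux

    # The unit normal n of a tangent line satisfies n . u = (r1 - r2) / dist,
    # because both centers sit exactly one radius away from that line.
    cos_t = (r1 - r2) / dist
    sin_t = math.sqrt(1.0 - cos_t * cos_t)

    sides = []
    for sign in (1.0, -1.0):
        nx = cos_t * ux + sign * sin_t * px
        ny = cos_t * uy + sign * sin_t * py
        sides.append(((c1[0] + r1 * nx, c1[1] + r1 * ny),
                      (c2[0] + r2 * nx, c2[1] + r2 * ny)))
    (a1, a2), (b1, b2) = sides
    return [a1, a2, b2, b1]
```

When the two radii are equal, `cos_t` is zero and the corners reduce to the centers offset by one radius along the perpendicular, which is the familiar "thick line" case. Filling both circles plus this quadrilateral produces a stroke with no gaps between successive touch samples.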

Surface and Windows Phone 7 are at opposite extremes of the Microsoft computing continuum. Surface is a large communal computer; like other smartphones, Windows Phone 7 is a small and extremely personal computer, in many ways much more personal than a desktop computer. Yet both machines are linked through the interface of touch. Multi-touch is the primary means of user input for both Surface and Windows Phone 7. (Amazingly, the pixel dimensions of the screens are in the same ballpark: the Microsoft Surface screen is 1024 × 768, and the larger of the two screens for Windows Phone 7 is 480 × 800.)

Porting my FingerPaint program from Microsoft Surface to Windows Phone 7 (and in the process renaming it to SpinPaint) was an "interesting" experience. Windows Phone 7 doesn't support WPF, of course, but it does support Silverlight, which is pretty much identical to WPF except for the hundreds of ways in which it's different. Silverlight doesn't have RenderTargetBitmap but it does have the equivalent WriteableBitmap. Everything looked good.

But then I got slapped in the face. I realized I couldn't do it because of the weird way in which touch support has metamorphosed in Silverlight and Windows Phone 7.

Some background: As you know from reading my article "Finger Style: Exploring Multi-Touch Support in Silverlight" in the March 2010 issue of MSDN Magazine, Silverlight 3 introduced the Touch.FrameReported event. This event provides low-level touch input in the form of down, move, and up actions.

Anyone with previous working knowledge of WPF and Silverlight routed events who encounters Touch.FrameReported will undoubtedly understand that it's only a preliminary and interim touch interface. The hope and expectation was that Touch.FrameReported would be replaced in the near future with something that fits in better with the other routed events.

We now have that. Silverlight for Windows Phone includes three routed events — ManipulationStarted, ManipulationDelta, and ManipulationCompleted — that are a subset of the Manipulation events supported in WPF 4.0. (I'm in the process of writing a series of columns about the WPF versions of the Manipulation events that will appear beginning in the August issue of MSDN Magazine.) These Manipulation events basically resolve one or more touch actions on a particular element into transforms. They are very powerful and we are very lucky to have them, despite the fact that the Manipulation events in Silverlight for Windows Phone are not nearly as versatile as the WPF versions.

However (and this is the rant I promised), when the Manipulation events came into Silverlight for Windows Phone, the Touch.FrameReported event was tossed out, and now we have no low-level touch support. This is a serious problem! The Manipulation events are great for what they do, but they represent only one particular way in which touch can be used. If Microsoft truly wants innovative applications for Windows Phone 7, we developers must have the option of using touch in a very flexible manner, and this requires low-level support.

There has been some talk about restoring Touch.FrameReported to Silverlight for Windows Phone. (It actually still exists in an inoperative form.) But at this point I don't want it back. What we really need in Silverlight are the TouchDown, TouchMove, TouchUp, TouchEnter, and TouchLeave routed events that are also supported in WPF 4. These events give us low-level support while fitting right into the routed event architecture. It's the only solution that makes sense.

Without low-level touch support, I could not port my simple WPF Surface program to Silverlight for Windows Phone. Instead, I had to rewrite it in XNA. (Of course that reinforces my belief that Windows Phone 7 developers should be adept with both Silverlight and XNA. You never know when a crucial feature will be missing in your first choice and you'll need to abandon one API for another.)

XNA has low-level touch support via the static TouchPanel.GetState method. What XNA lacks is any way of directly drawing circles and arbitrary polygons on a bitmap! This is an annoying deficiency, but it's something that can be compensated for with code. (But once low-level touch input is removed from an API, there is no compensation.)
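To give a sense of the kind of code that compensates for this, here is a minimal sketch of filling a circle directly into a pixel buffer, one horizontal span per row. It is written in Python for illustration and is not the SpinPaint implementation. The function name `fill_circle` and the flat row-major buffer layout are assumptions. In an XNA program, a buffer like this would presumably be copied into a texture afterward, for example with Texture2D.SetData.

```python
import math


def fill_circle(pixels, width, height, cx, cy, radius, color):
    """Fill a circle into a flat, row-major pixel list, clipped to the buffer.

    For each row the circle crosses, the half-width of the span follows from
    the circle equation: half = sqrt(radius^2 - dy^2).
    """
    y_min = max(0, math.ceil(cy - radius))
    y_max = min(height - 1, math.floor(cy + radius))
    for y in range(y_min, y_max + 1):
        dy = y - cy
        # Guard against a tiny negative value caused by rounding.
        half = math.sqrt(max(0.0, radius * radius - dy * dy))
        x_min = max(0, math.ceil(cx - half))
        x_max = min(width - 1, math.floor(cx + half))
        for x in range(x_min, x_max + 1):
            pixels[y * width + x] = color


# Usage: a 5 x 5 buffer with a radius-1 circle painted at its center.
canvas = [0] * 25
fill_circle(canvas, 5, 5, 2, 2, 1, 0xFF0000)
```

Arbitrary convex polygons, such as the quadrilateral connecting two touch samples, can be filled with the same row-by-row idea by intersecting each row with the polygon's edges.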

Am I allowed a second rant? In the March CTP of the Windows Phone 7 tools, the XNA TouchLocation structure had a property named Pressure, which is a float ranging from 0 to 1. I can personally attest that this property reported meaningful information when coding for the Zune HD using XNA Game Studio 3.1. However, with the April CTP of the Windows Phone 7 tools, the Pressure property is gone! So now, even when using the low-level touch interface in an XNA program, we can't get pressure information. I am stunned.

Let me put this bluntly: Multi-touch is the most important user-input paradigm since the mouse; Windows Phone 7 is likely to be the most popular Microsoft product that implements multi-touch; yet, in successive iterations of the Windows Phone 7 programming tools, we application developers are systematically losing our ability to use multi-touch in a flexible and innovative manner. This trend must be reversed.

At any rate, I did the best I could. Here's the XNA source code for SpinPaint for Windows Phone 7 that you can compile and run on the Windows Phone 7 emulator using the April CTP.

Someday, I hope to see it running on an actual phone.
